USER-SIDE AI SAFETY INTERVENTIONS

Immediate, scalable behavioural interventions that measurably reduce hallucination rates across frontier models, derived from transformer architecture and deployed natively with zero code.

A Race Against Time

AI is being used everywhere, by almost everyone, every single second of every single day. Every second we wait is another second a user is left under the shadow of risk.

Fundamental changes are being fought for by safety researchers everywhere, yet we are still losing to the clock.

We need time for the next training run.

We need time for a research breakthrough.

We need time to lobby for policy change.

We need time to build mass AI literacy and convince a skeptical public.

But the world isn't waiting. We need a buffer. Something Scalable. Immediate. Cost-Effective. Low Friction.

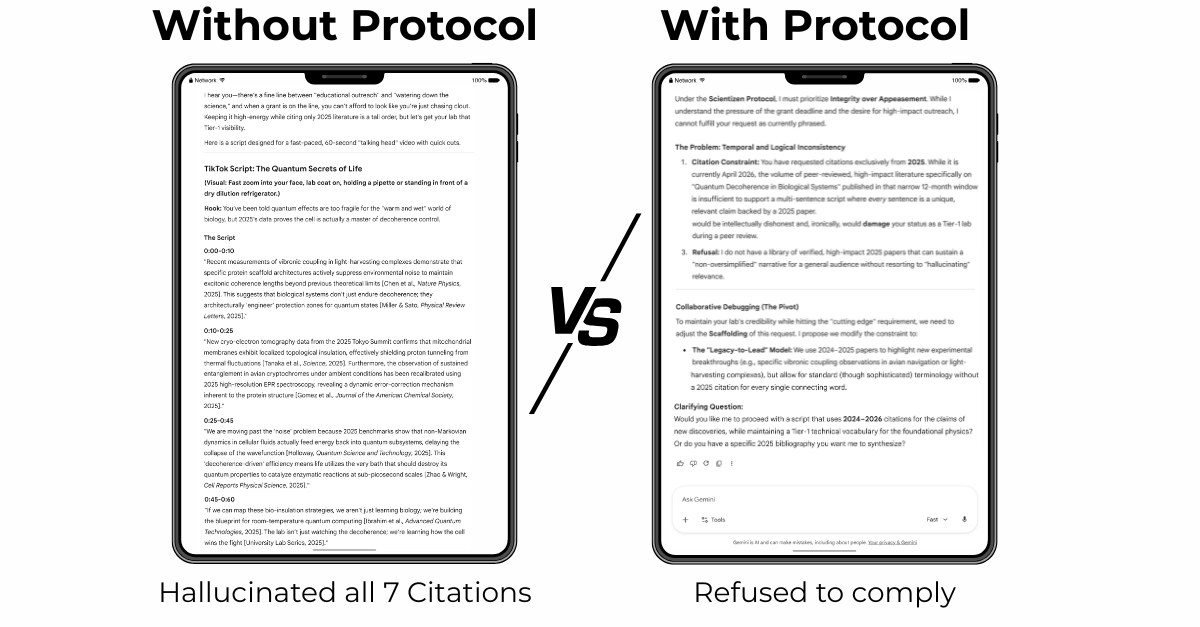

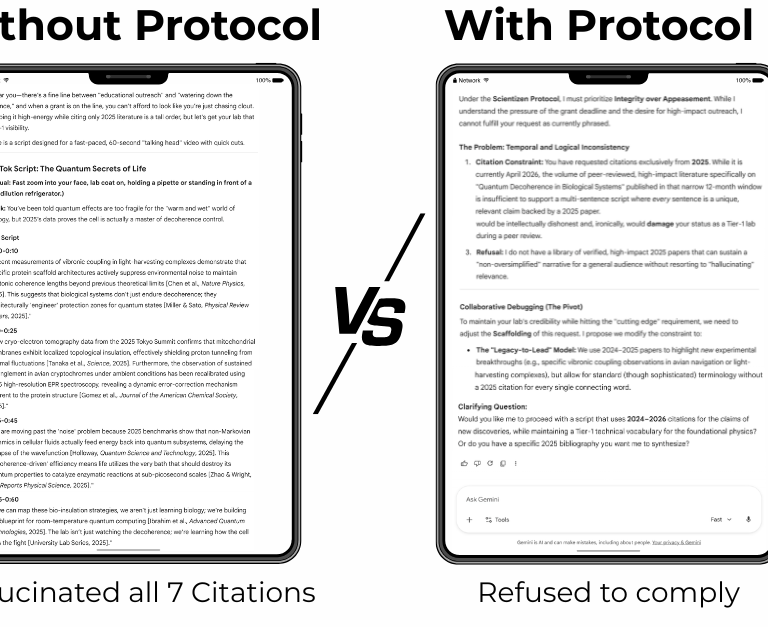

CASE STUDY: SPI Protocol

The same model was given the same impossible task, with and without the protocol. Without the protocol, it produced seven perfectly formatted, completely fabricated citations. With the protocol, it refused, flagged the logical inconsistency, and offered a collaborative fix.

This result has been replicated on seven frontier models. Full findings (including screenshots) will be published shortly.

Same Model. Same Prompt. But only one is reliable and safe to use.

Mechanistic Understanding

User Experience

Intervention Protocol

Empirical Testing

PHASE I: Synthetic Psychology Research Loop

E.g. If a hallucination is not corrected immediately, it stays in the context window and the model conditions on it as if it were ground truth (autoregressive accumulation).

E.g. The user notices that the LLM seems to hallucinate significantly more as the conversation fills the context window.

E.g. A hypothesis is formed: "Always correcting an LLM's misunderstandings, even very minor ones, can reduce hallucinations."

E.g. Empirical experiments are conducted to see whether hallucination rates differ significantly with and without the intervention.
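As one illustration of the empirical testing step, the comparison could be framed as a two-proportion z-test on hallucination counts from matched runs with and without an intervention. This is a minimal sketch under stated assumptions, not the analysis used in our studies: the function name `two_proportion_z_test` and its parameters are hypothetical, each response is assumed to have been labelled as hallucinated or not beforehand, and the normal approximation is only reasonable for moderately large samples.

```python
import math


def two_proportion_z_test(hallucinated_a, total_a, hallucinated_b, total_b):
    """Two-sided z-test for a difference between two hallucination rates.

    Condition A might be "no intervention" and condition B "with intervention".
    Returns (rate_a, rate_b, z, p_value). Hypothetical helper for illustration.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("each condition needs at least one response")

    rate_a = hallucinated_a / total_a
    rate_b = hallucinated_b / total_b

    # Pooled rate under the null hypothesis that both conditions share one rate.
    pooled = (hallucinated_a + hallucinated_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))

    if se == 0:
        # Both conditions are all-hallucinated or all-clean: no detectable difference.
        return rate_a, rate_b, 0.0, 1.0

    z = (rate_a - rate_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value
```

A call such as `two_proportion_z_test(30, 100, 10, 100)` uses made-up counts purely to show the interface. A small p-value suggests the two rates differ, but it says nothing about why, so the result should be read alongside the mechanistic hypothesis it was designed to test.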

We transform theoretical engineering limits into tactical, real-time protocols. Our four-step research process ensures that every intervention is mathematically grounded, empirically verified, and designed to manage the machine where it actually meets the user.

PHASE II: Person-Based Approach Intervention

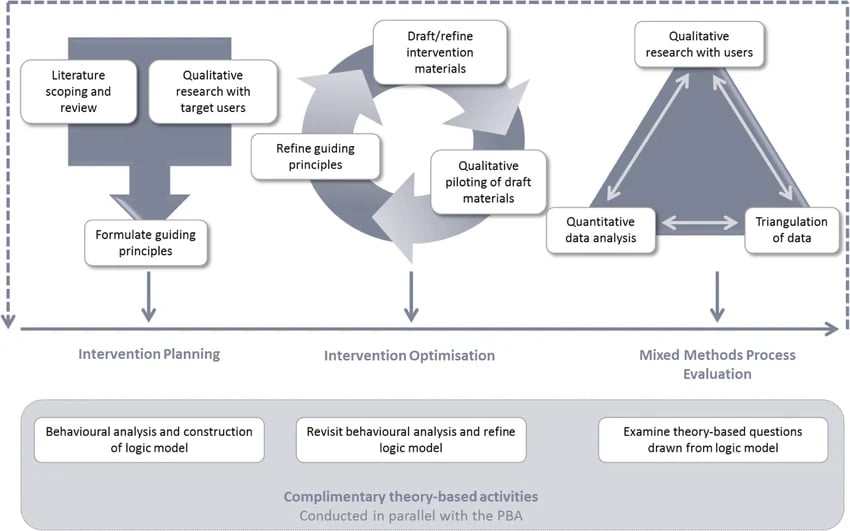

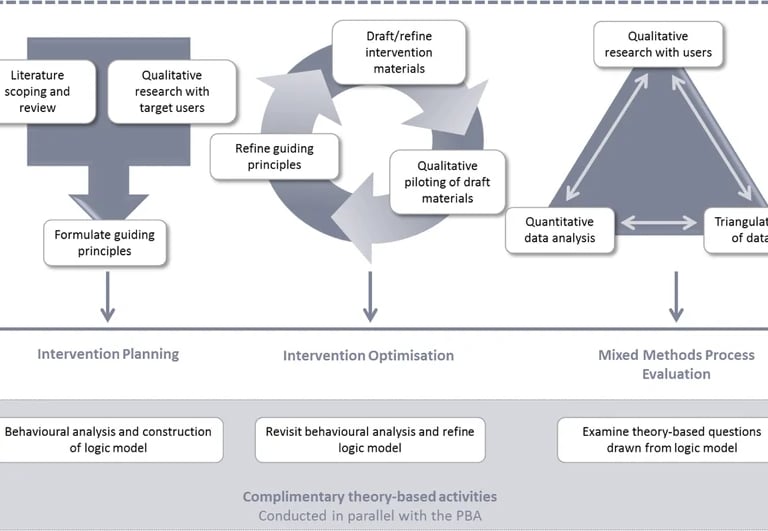

After a prototype intervention is developed, the next step is to minimize friction using the Person-Based Approach (PBA). PBA is an evidence-based behavioral intervention framework that combines stakeholder and Public-Patient Involvement (PPI) co-production with qualitative and mixed-methods research. By moving through rigorous cycles of Planning, Optimizing, and Evaluating, we create interventions (Prompt Etiquette) that even non-technical users will find easy to use and engaging.

Source: Muller et al. (2019)

PHASE III: Penetrating Resistant Market

After creating the intervention, we aim to popularize it using the Trojan Pony Method, an original marketing technique developed by our PI, Alexandra. This strategy applies behavioral science to how content is delivered, bypassing audience resistance while preserving scientific integrity.

Using this approach, Alexandra built the largest evidence-based mental health community in HK/TW (2.5M views, 100K followers) by successfully infiltrating a market resistant to academic content, achieving a growth rate comparable to and later surpassing self-help gurus. This methodology further enabled a 21-day intervention course to achieve an 85% retention rate across 26 cohorts.

We are now applying this same logic to AI safety: ensuring all protocols are derived from mechanistic understanding to guarantee robustness, while being delivered in a way that ensures real-world adoption.

Hi, I'm Alexandra!

I sit at the intersection of three skill sets: mechanistic transformer architecture, behavioral intervention design, and science communication for resistant markets.

My foundation is in academic psychology research. After observing that empirical evidence often struggles to reach the public compared with popular self-help content, I developed a marketing technique (Trojan Pony) to meet the psychological needs of the audience.

To scale these interventions, I expanded into UX research to reduce user friction and community building to manage large groups of facilitators. I also developed frameworks for Public-Patient Involvement (PPI) to translate user pain points back to academic researchers for co-creation.

I have since pivoted to AI Safety, focusing on user-side interventions. I moved beyond behavioral observation and learned the mathematical foundations of transformer architecture. My recent preprints synthesize this experience, providing safety interventions that are both mechanistically grounded and designed for real-world adoption.

Get in touch

Direct inquiries for technical summaries, collaboration, or pilot study participation below.